Artificial intelligence (AI) and high-performance computing (HPC) workloads are transforming global data center infrastructure. As enterprises deploy generative AI, large language models, and advanced analytics platforms, demand for GPU-powered computing has increased dramatically.

To support this growth, hyperscalers, colocation providers, and emerging AI cloud platforms are rapidly expanding GPU infrastructure worldwide. Technologies such as coolant distribution units (CDUs) for AI GPU servers, liquid cooling, and GPU-as-a-service models are becoming essential to support high-density AI workloads.

Understanding which operators are scaling GPUs the fastest and how they are building this infrastructure has become critical for enterprises, investors, and infrastructure vendors.

AI workloads require enormous parallel computing power. Training modern AI models often involves thousands of GPUs working together in high-density clusters.

This creates new infrastructure requirements, including:

• High-density GPU racks

• Liquid cooling infrastructure

• Massive power capacity

• Hyperscale AI campuses

According to insights tracked through BIS MarketIQ, operators are now building AI-ready data center campuses ranging from 250 MW to more than 1 GW to support GPU-dense deployments.

Many of these deployments rely on CDUs for AI GPU servers, which circulate coolant so that liquid cooling systems can efficiently remove the heat generated by GPU clusters.

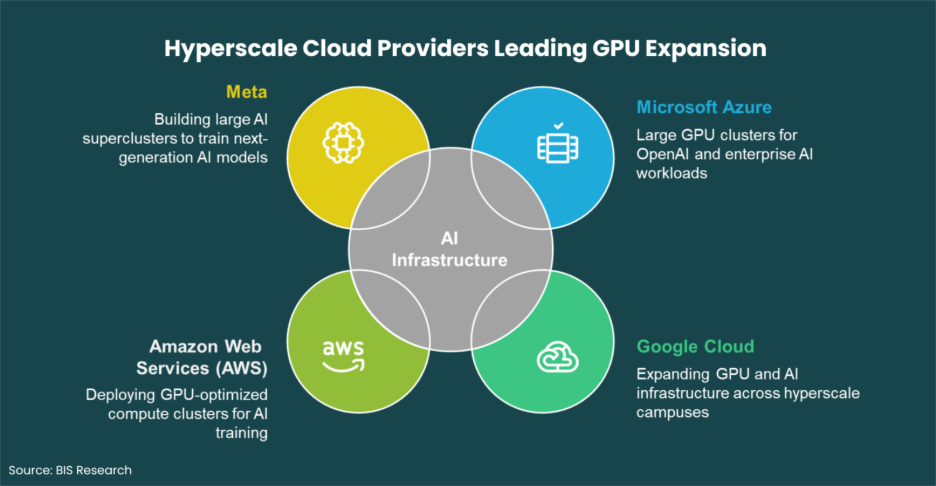

Hyperscale Cloud Providers Leading GPU Expansion

Hyperscale cloud providers are currently scaling the largest GPU infrastructure globally. These companies are building massive AI clusters to support generative AI services, enterprise AI platforms, and research workloads.

| Operator | AI Infrastructure Strategy |
| --- | --- |
| Microsoft Azure | Large GPU clusters for OpenAI and enterprise AI workloads |
| Google Cloud | Expanding GPU and AI infrastructure across hyperscale campuses |
| Amazon Web Services (AWS) | Deploying GPU-optimized compute clusters for AI training |
| Meta | Building large AI superclusters to train next-generation AI models |

These deployments often require extremely high rack densities, exceeding 100 kW per rack. As a result, operators must carefully evaluate the pricing of liquid cooling CDUs and how they integrate with the rest of the infrastructure when designing AI-ready facilities.
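
To put the campus sizes and rack densities cited above in perspective, the arithmetic can be sketched in a few lines of Python. This is a rough illustration, not a sizing tool: the function name `racks_supported` and the default PUE of 1.2 are assumptions made for this example, and it treats the campus figure as total facility power rather than IT load.

```python
def racks_supported(campus_mw: float, rack_kw: float, pue: float = 1.2) -> int:
    """Estimate how many racks a campus could power.

    campus_mw: total facility power in megawatts (assumed, not IT load)
    rack_kw:   IT load per rack in kilowatts
    pue:       assumed power usage effectiveness (facility power / IT power)
    """
    if campus_mw <= 0 or rack_kw <= 0:
        raise ValueError("campus_mw and rack_kw must be positive")
    if pue < 1.0:
        raise ValueError("pue cannot be below 1.0")
    # Power left for IT equipment after cooling and other overhead.
    it_power_kw = campus_mw * 1000 / pue
    # Only whole racks count, so round down.
    return int(it_power_kw // rack_kw)


if __name__ == "__main__":
    for campus_mw in (250, 1000):
        racks = racks_supported(campus_mw, rack_kw=100)
        print(f"{campus_mw} MW campus at 100 kW per rack: about {racks} racks")
```

Under these assumptions, a 250 MW campus supports roughly 2,083 racks at 100 kW each, and a 1 GW campus roughly 8,333. A different PUE or a different reading of the campus figure would shift these numbers.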

Through platforms like BIS MarketIQ, stakeholders can track hyperscale expansions, including GPU configurations, cooling architectures, and new capacity coming online globally.

A new generation of AI-focused infrastructure providers, often called neocloud providers, is rapidly scaling GPU capacity to serve AI startups and enterprises.

| Company | Focus |
| --- | --- |
| CoreWeave | GPU cloud platform optimized for AI workloads |
| Lambda Labs | GPU infrastructure for AI training and inference |
| Crusoe Energy | Sustainable AI infrastructure powered by energy reuse |
| Nscale | European AI cloud infrastructure provider |

Enterprises seeking AI infrastructure frequently issue requests for proposals (RFPs) for neocloud GPU colocation to secure GPU capacity for large-scale training workloads.

Many organizations also negotiate long-term contracts with GPU cloud providers to guarantee access to GPU clusters. In addition, enterprises often evaluate enterprise-grade alternatives to CoreWeave to diversify infrastructure risk.

Colocation operators are also investing heavily in GPU-ready infrastructure to support hyperscalers and enterprise AI deployments.

| Operator | Expansion Strategy |
| --- | --- |
| Digital Realty | Developing hyperscale AI campuses |
| Equinix | Expanding AI-ready colocation infrastructure |
| Vantage Data Centers | Deploying liquid-cooled GPU facilities |
| QTS Data Centers | Building large AI infrastructure campuses |
| STACK Infrastructure | Developing hyperscale GPU-ready data centers |

Many operators are upgrading older facilities by retrofitting existing data centers with CDUs to enable high-density GPU deployments.

In some cases, infrastructure operators are looking to buy 1 MW CDU systems to support large GPU clusters and liquid cooling systems.
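
As a rough illustration of how a 1 MW CDU relates to rack counts, the sketch below estimates how many racks one unit could serve and how many units a cluster would need. The function names, the 80% liquid heat-capture fraction, and the single spare unit are hypothetical values chosen for this example; real sizing depends on vendor specifications and facility design.

```python
import math


def racks_per_cdu(cdu_kw: float, rack_kw: float, liquid_fraction: float = 0.8) -> int:
    """Racks one CDU can serve, given the share of rack heat removed by liquid.

    liquid_fraction is an assumed value: the portion of each rack's heat
    carried away by the liquid loop (the remainder is left to air cooling).
    """
    if cdu_kw <= 0 or rack_kw <= 0:
        raise ValueError("cdu_kw and rack_kw must be positive")
    if not 0 < liquid_fraction <= 1:
        raise ValueError("liquid_fraction must be in (0, 1]")
    liquid_load_kw = rack_kw * liquid_fraction
    return math.floor(cdu_kw / liquid_load_kw)


def cdus_needed(racks: int, cdu_kw: float, rack_kw: float,
                liquid_fraction: float = 0.8, spares: int = 1) -> int:
    """CDUs required for a cluster, plus an assumed number of spare units."""
    per_cdu = racks_per_cdu(cdu_kw, rack_kw, liquid_fraction)
    if per_cdu == 0:
        raise ValueError("a single rack exceeds the capacity of one CDU")
    return math.ceil(racks / per_cdu) + spares


if __name__ == "__main__":
    print(racks_per_cdu(cdu_kw=1000, rack_kw=100))            # racks per 1 MW CDU
    print(cdus_needed(racks=100, cdu_kw=1000, rack_kw=100))   # CDUs for 100 racks
```

With these placeholder inputs, one 1 MW CDU covers 12 racks at 100 kW each, so a 100-rack cluster would need 9 units plus one spare.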

Another emerging trend in the AI infrastructure market is the conversion of cryptocurrency mining facilities into GPU data centers.

These projects include Bitcoin mining facility conversions, in which mining infrastructure is repurposed to host GPU clusters for AI training.

Similarly, companies are exploring lease agreements that allow AI operators to deploy HPC infrastructure at former crypto mining sites.

Because mining facilities already have significant power capacity, they can often be converted into AI infrastructure relatively quickly.

Not every organization can build its own AI data center. As a result, many enterprises are adopting GPU-as-a-service platforms offered by cloud providers and AI infrastructure companies.

When evaluating these platforms, companies often compare enterprise pricing models for GPU-as-a-service to determine the best option for their AI workloads.

This approach allows enterprises to access GPU infrastructure without investing in their own data center facilities.
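
One way to frame such a pricing comparison is a break-even calculation between pay-per-hour and reserved capacity. The sketch below is illustrative only: the two pricing models, the function names, and every rate shown are placeholders invented for this example, not quotes from any provider.

```python
HOURS_PER_MONTH = 730  # average hours in a month (8,760 / 12)


def on_demand_cost(gpus: int, months: int, hourly_rate: float,
                   utilization: float) -> float:
    """Total cost when paying per GPU-hour only for hours actually used."""
    if not 0 <= utilization <= 1:
        raise ValueError("utilization must be between 0 and 1")
    return gpus * months * HOURS_PER_MONTH * utilization * hourly_rate


def reserved_cost(gpus: int, months: int, monthly_rate: float) -> float:
    """Total cost when paying a flat monthly rate per reserved GPU."""
    return gpus * months * monthly_rate


def break_even_utilization(hourly_rate: float, monthly_rate: float) -> float:
    """Utilization above which the reserved model becomes cheaper."""
    if hourly_rate <= 0:
        raise ValueError("hourly_rate must be positive")
    return monthly_rate / (hourly_rate * HOURS_PER_MONTH)


if __name__ == "__main__":
    # Placeholder rates, not market prices.
    hourly, monthly = 4.00, 1460.00
    print(f"Break-even utilization: {break_even_utilization(hourly, monthly):.0%}")
    print(f"On-demand at 50%: {on_demand_cost(8, 12, hourly, 0.5):,.0f}")
    print(f"Reserved:         {reserved_cost(8, 12, monthly):,.0f}")
```

With these placeholder rates the two models cost the same at 50% utilization. A workload that keeps GPUs busier than that would favor reserved capacity, while a burstier one would favor on-demand.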

Tracking GPU infrastructure expansion across global markets can be complex. Many operators still rely on fragmented spreadsheets and disconnected tools, limiting real-time visibility into market developments.

BIS MarketIQ provides a unified intelligence platform that allows users to monitor global AI data center infrastructure, including:

• planned and operational data center campuses

• megawatt capacity expansion

• GPU density and configurations

• cooling architectures and infrastructure partners

By providing structured insights into AI infrastructure development, BIS MarketIQ helps operators, investors, and enterprises make faster and more informed strategic decisions.