The U.S. data center industry is entering a period of unprecedented infrastructure expansion. Between 2025 and 2027, operators are expected to deploy approximately 8.1 million GPUs, reflecting the rapid acceleration of artificial intelligence workloads and the growing importance of high-performance computing capacity.

This expansion represents more than a routine upgrade cycle. It signals a structural shift in how data center operators plan, finance, and integrate computing infrastructure to support the next generation of AI development. As organizations race to build scalable AI platforms, GPU procurement strategies are becoming tightly linked to long-term infrastructure planning and competitive positioning.

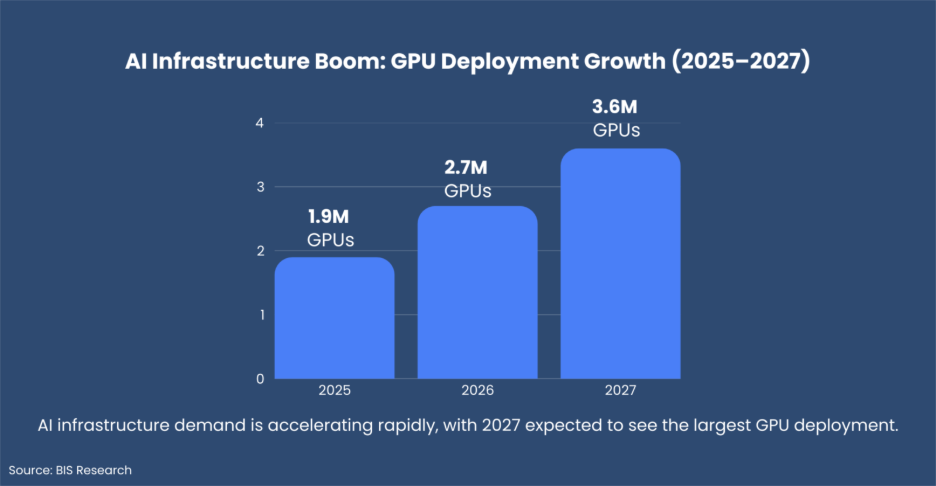

The projected deployment timeline illustrates how quickly demand is growing. Industry estimates suggest 1.9 million GPUs will be installed in 2025, followed by 2.7 million in 2026 and 3.6 million in 2027.

Roughly 44% of the entire three-year deployment volume will occur in 2027 alone. This surge highlights how aggressively operators are scaling their infrastructure to support advanced AI models and compute-intensive workloads.
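The arithmetic behind this deployment curve can be checked with a short sketch. It uses only the yearly estimates quoted above; the variable names are illustrative. Because the yearly figures are rounded, they sum to 8.2 million, slightly above the approximately 8.1 million headline total.

```python
# Yearly U.S. GPU deployment estimates quoted in the article (millions of units).
deployments_m = {2025: 1.9, 2026: 2.7, 2027: 3.6}

# Sum of the rounded yearly figures (may differ slightly from the headline total).
total_m = sum(deployments_m.values())

# Share of the three-year volume installed in each year.
shares = {year: units / total_m for year, units in deployments_m.items()}

# Year-over-year growth in annual installations.
years = sorted(deployments_m)
growth = {
    later: deployments_m[later] / deployments_m[earlier] - 1
    for earlier, later in zip(years, years[1:])
}

for year in years:
    print(f"{year}: {deployments_m[year]:.1f}M GPUs, {shares[year]:.0%} of total")
for year, rate in growth.items():
    print(f"{year} growth vs. prior year: {rate:.0%}")
```

On these figures, annual installations grow by roughly 42% in 2026 and 33% in 2027, so the volume keeps rising even as the growth rate moderates.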

For companies operating in the data center ecosystem, including infrastructure vendors, cooling technology providers, and power equipment suppliers, understanding this deployment curve is critical. The timing of these installations will influence supply chains, investment cycles, and infrastructure demand across the industry.

Explore BIS MarketIQ – Sign-up for a Free Trial

One of the most notable shifts in this cycle is the move away from short-term hardware purchasing. In the past, many operators procured GPUs in response to immediate demand fluctuations. That approach is becoming less viable as AI workloads grow in scale and complexity.

Today, buyers are securing multi-year GPU supply commitments and coordinating deliveries with new campus construction and major facility retrofits. This approach allows operators to reduce supply risks and ensure that computing capacity is available when needed for large AI training clusters.

Access to high-performance GPUs is now directly tied to an organization’s ability to compete in the AI economy. As a result, procurement certainty is often prioritized over marginal cost reductions.

Explore deeper insights into global AI infrastructure, hyperscale investments, and GPU deployment trends.

Visit BIS MarketIQ to access data-driven intelligence and market analytics powering the future of AI infrastructure.

Large cloud providers continue to anchor the majority of GPU demand. Their scale enables them to standardize procurement strategies, negotiate long-term supply agreements, and deploy hardware across multiple facilities simultaneously.

Among the major buyers, Amazon Web Services leads the market with approximately 2.5 million GPUs planned across the 2025–2027 cycle. Other hyperscale operators include:

• Meta with roughly 1.0 million GPUs

• Oracle with about 808,000 GPUs

• Google deploying around 793,000 GPUs

• Microsoft with approximately 726,000 GPUs

Collectively, these five companies account for roughly 5.8 million GPUs, or about 72% of the projected 8.1 million deployments.
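The combined hyperscaler share can be estimated from the figures listed above. The following is a minimal sketch that treats the article's approximate counts as exact and uses the 8.1 million headline total as the denominator; the names `planned_gpus` and `TOTAL_GPUS` are illustrative.

```python
# Planned GPU counts for 2025-2027 as quoted in the article (approximate).
planned_gpus = {
    "Amazon Web Services": 2_500_000,
    "Meta": 1_000_000,
    "Oracle": 808_000,
    "Google": 793_000,
    "Microsoft": 726_000,
}

# Headline three-year total cited in the article (approximate).
TOTAL_GPUS = 8_100_000

hyperscaler_total = sum(planned_gpus.values())
hyperscaler_share = hyperscaler_total / TOTAL_GPUS

# Print each buyer's planned volume and its share of the headline total.
for name, count in sorted(planned_gpus.items(), key=lambda kv: kv[1], reverse=True):
    print(f"{name:<20} {count:>10,} {count / TOTAL_GPUS:6.1%}")
print(f"{'Combined':<20} {hyperscaler_total:>10,} {hyperscaler_share:6.1%}")
```

Under these assumptions the five hyperscalers together plan about 5.83 million GPUs, with Amazon Web Services alone representing close to a third of the total.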

At the same time, the buyer landscape is expanding. AI-focused infrastructure providers are emerging as influential players in GPU procurement. Companies such as CoreWeave, with more than 600,000 GPUs expected, and projects like xAI’s Colossus 2, estimated at around 550,000 GPUs, demonstrate the rise of AI-native computing platforms.

These operators are building infrastructure designed specifically for high-density AI training environments rather than traditional cloud workloads.

As GPU clusters become larger and more powerful, infrastructure readiness has become a decisive factor in procurement planning.

Large installations, particularly those exceeding 10,000 GPUs per site, produce significant thermal loads and require far greater power density than conventional data center deployments. As a result, operators must ensure their facilities can support advanced cooling technologies and upgraded power systems.

Key considerations influencing GPU procurement now include:

• Liquid cooling system integration

• Power availability and substation upgrades

• High-density rack configurations

• Long-term scalability of facilities

Rather than purchasing GPUs independently, operators are designing integrated infrastructure ecosystems capable of supporting next-generation AI hardware.

The rapid growth of generative AI and large language models is fundamentally changing how data centers are designed. AI workloads require tightly interconnected GPU clusters, high-speed networking, and optimized thermal management.

To support these demands, operators are building AI-centric data center campuses engineered for high-density computing and efficient energy usage.

GPU deployment decisions are increasingly aligned with internal AI development roadmaps. Infrastructure capacity now plays a direct role in determining how quickly organizations can train models, release new AI products, and scale their services.

The projected 3.6 million GPU installations in 2027 highlight how critical AI-driven computing has become for cloud providers and AI companies alike.

Power availability is emerging as one of the most important factors shaping new data center development. In several established data center regions, grid constraints and regulatory limitations are slowing expansion.

As a result, operators are exploring locations with stronger energy infrastructure, scalable grid capacity, and stable regulatory environments. Long-term power purchase agreements are becoming a core component of AI infrastructure strategy.

This geographic diversification is expected to drive increased demand for cooling technologies, power distribution equipment, and facility construction in emerging data center markets.

With more than sixty operators participating in the upcoming GPU deployment cycle, procurement intelligence is becoming increasingly valuable.

Understanding which operators are expanding most aggressively, when installation peaks will occur, and how cluster density will influence cooling and power demand can significantly affect partnership strategies and revenue planning.

For vendors and investors, identifying high-intensity buyers and aligning with their infrastructure timelines can create significant opportunities.

The deployment of 8.1 million GPUs across U.S. data centers between 2025 and 2027 represents one of the largest computing infrastructure expansions in recent history.

This cycle marks the construction phase of the AI backbone that will power future innovation across industries. Companies that align with buyer priorities such as long-term capacity planning, integrated infrastructure design, AI-optimized cluster architecture, and energy resilience will be best positioned to capture value during this transformation.